[Warning: Longread]

On the ills of the Basel-IV ORM proposals:

1. Unwarranted, certainly unscientific overreliance on ‘models’;

2. Modeling for prospective use instead of hindsight understanding;

3. Too much top-down, not enough bottom-up;

4. No humans in the picture, hence the wrong and unactionable indicators.

Introduction

Just about all of the banking industry, and other financials in its wake, have had to deal with loads of regulatory requirements. Justified, some say, for ‘they’ cause(d) so much misery, far beyond the merest temporary loss of bonuses, that the ‘un’ should be (should long before have been) detached from ‘unbridled’. So Basel II and III swooped in, requiring much more explicit and detailed handling of financial business than ever before. The move from laissez-faire to regulation, to regulation with sanction schemes, to sanctions (possibly interpreted as ‘token’…), was extended first with provability and then with complete proof-demonstration as the minimum requirement.

All this, however, has created a large and, I would even say, in general quite overpaid [disclaimer: I am profiting too] industry of consultants, quants, ‘risk managers’, reviewers, assessors, auditors, and scores of Toms, Dicks[1] and Harries of the GRC kind. All very likeable, nice lads and lassies, but maybe not all quite worth their salt, certainly not their bonuses, and not always worth lending much of one’s ear to.

Since March, suddenly, there’s news. The Basel Committee on Banking Supervision has released a consultative paper on ideas for (much-needed, many know) simplification of the operational risk management part of the regulations, for the forthcoming Basel IV.

[Before you know it, some rogue ruins your holiday. Like Claudius Civilis, now at the Rijksmuseum Amsterdam]

Now I don’t mean to repeat the rather clear critique already around on the Internet, as that focused mostly on the migration towards the new proposed Standardised Measurement Approach. E.g., that so many had built up such a high degree of expertise that might now go to waste (too bad; such is life in a regulated environment), that the theoretical or practical underpinning is lacking (it is, for reasons below), that the new formulas are not forward-looking (in another light, a plus; see below), that no ‘improvement’ in ORM practices may be achieved (for reasons also clarified below), that VaR is a fake (true; see below) and that scenarios were underweighted (also true; see below).

Of course, it is a good thing that BCBS recognises the partial ineffectiveness of ORM as it stood in the past requirements, and the need for change. Even if not entirely for all the right (listed) reasons…

Previous strays

The chief one (as also noted in the critique above) being that previous requirements had focused so much on financial figures, whereas the O in ORM is for a major part non-financial: in its causes, mechanics, outcomes, and manageability.

This requires reconsidering ORM as comprising ‘all’ the risks associated with people (well…) assembled, in buildings or not, to work on the assembly lines of information flowing through the organisation. At any level, it’s no more than that. Decisions? Aren’t they supposed to be mere mechanical switchboard results of rational arguments and well-founded formulae…?

But however framed, it is clear that modelling the causes, and modelling the mechanics (i.e., how a somewhat random, time-variant set of (multiple) root (sic) causes, in somewhat random, time-variant and feedback-looping combinations, leads to outcomes, positive and negative), is inherently incomplete. Let alone that the modellers most often have little clue about the psychological intricacies of the human actors, or about how formal procedures and IT commonly behave apart from the intended tightrope walk over some chasm. I believe no amount of modelling can suffice when exactitude is required. Question: what extreme level of modelling of all of a major bank’s individuals’ activities would have captured the LIBORgate vulnerability ..?

For one would also have to include the weaknesses of individual controls and of combinations of controls: inherent ones (“imperfect cross hedge”), those due to imperfect implementation and maintenance, and those due to the cost/benefit balance, which, for lack of demonstrable/hard benefits, often leads to underbudgeting of necessary controls. And the avoidance behaviour of the very people the controls were directed against.

One should already have had a warning signal from the Basel II/III approaches to operational risk, with their indicative classification (Annex 9), which, as expected, many took to be the rule, since it turned into a matter of ‘comply or die explaining’. That should have been expected, or one wouldn’t have had a proper understanding of the GRC industry, but it was and is unfortunate, since the indicative classification was so badly thought out: not orthogonal (and consider that under the surface of all non-ops risk/loss types, there’s an ops risk/miss affair going on); in its details heavily skewed towards some operational risk causes and/or effects; no room for time-variant changes in those (in the classification itself); no consideration of the variation of effects, or of feedbacks or other complexity, of any ‘single’ event; on the other hand, a lack of the detail needed for actionable management reporting; etc. The ‘events’ to be dealt with were, and are, inherently too complex to capture in such a flat classification.

And through this, the resulting data wasn’t fit for use anywhere, not even for industry-wide benchmarking, as every institution necessarily had its own peculiarities in registering ops losses, making its data sufficiently non-standard to be incomparable with, and unusable at, other institutions. Plus the directive to have all sorts of managers turn themselves in for ops losses in their quarters… Even where ‘independent’ accountancy was used, the source data was provided (if at all) with all the wrong incentives, so what expectation of accuracy or completeness could one have had ..?

All this demonstrates that the risk under consideration is one of common and specific operations, both in their generic (and partially uncontrollable) aspects and in their defiance, nay very opposition, to any portfolio approach. There simply is no hedging within any (sub)portfolio of operational risks. One could hardly even out any risks by requiring a margin on all activities to compensate for occasional major losses, since there is no ‘return’ or ‘interest’ to be had from individual activities. One holds no operational risk activities for some hopefully positive return. (Though the idea of loss avoided through control activities such as Audit goes a little way towards that, it is very hard to quantify, as by its very nature it creates invisible, unmeasurable near or far misses, not financial contributions towards ops loss compensation.)

So, unfortunately, operational risks cannot be managed as if they were a portfolio of similar or identical products, hedgeable or even tradable[2], but must be dealt with on a case-by-case basis. And there the full scope of operational risk comes into view again: any ‘event’ is not a (relatively) small monetary investment that goes under, but a most complex set of external and internal events and actions that in harmony (rather, symphony) work against and for the organisation’s interests in all sorts of ways, varying over time, with all components also varying in their contributions over time, and with all sorts of hard-to-determine feedback loops. Even all business and support processes and procedures working as intended, aimed at achieving objectives rather than at controlling non-performance, don’t work without an overwhelming dose of grease in the form of unofficial business dynamics. Let alone that deviations from the ideal would keep to the ‘ideal’ scenarios of models. The finer the mesh of controls, the more loopholes will exist; a physical net works in 2D, here we have 3- to 4+-D meshes…

Plus, preventative controls, the preferred kind, incur serious costs everywhere without being compensated by revenue: loss avoided isn’t net cash inflow! Classification is (very) hard, and (hence) no sufficiently precise PD, no LGD, no EAD (!), and hardly any EL (?) can be had, other than by predicting major UL… So no RWA, no risk-weighted capital, no broadside control through that.
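For readers outside credit risk, a short worked formula may help here. In the standard Basel credit-risk decomposition, the expected loss on an exposure is the product of the probability of default (PD), the loss given default (LGD, as a fraction of the exposure) and the exposure at default (EAD). Read against the paragraph above: if none of PD, LGD and EAD can be estimated with any precision for operational ‘events’, no factor of the product is available, and so neither is EL.

```latex
\mathrm{EL} = \mathrm{PD} \times \mathrm{LGD} \times \mathrm{EAD}
```

With purely hypothetical figures for a credit exposure, PD = 2%, LGD = 45% and EAD = 1,000,000 give EL = 0.02 × 0.45 × 1,000,000 = 9,000. For an operational ‘event’ there is no counterparty, no default and no exposure amount to plug in.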

And (should be) cheers all around: also no VaR, contender for the worst indicator and approach ever developed.

Only if (not when) one took the financial-market equivalent of studying the assets invested in ‘fundamentally’, weighing all (…) the ways in which any such asset might fail internally and lead to default, and then used those studies for PD et al., could something comparable be had. Done for one’s own organisation, this would require studying one’s own business processes in all their detail and establishing the risk of failure at each and every minute step, only to conclude that all processes are at a high (+) risk of failure. Only then can one speak of a portfolio of business processes at risk, still kept in portfolio for their (on balance) positive contribution to the overall organisation portfolio. But then, this is operational risk analysis in all its glory (quod non) as sketched above: bound to be imprecise, bound to be incomparable with other banks, bound to be qualitative… the horror (to some).
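To make the arithmetic behind ‘all processes are at a high (+) risk of failure’ a little more concrete, here is a minimal sketch. It assumes, purely for illustration, that process steps fail independently with small, known probabilities; that is exactly the kind of simplification the text warns against (no feedback loops, no correlated human behaviour). The function name and the figures are hypothetical. Even so, tiny per-step failure rates add up quickly over the number of steps in a real process landscape.

```python
import math


def prob_any_failure(step_failure_probs):
    """Chance that at least one step fails, ASSUMING independent steps.

    Real operational failures are correlated and feedback-driven, so this
    illustrates scale only; it is not an estimate of anything.
    """
    # Probability that every step succeeds is the product of (1 - p_i).
    all_succeed = math.prod(1.0 - p for p in step_failure_probs)
    return 1.0 - all_succeed


if __name__ == "__main__":
    # Hypothetical figures: each step fails once in 1,000 executions.
    for n_steps in (10, 200, 2000):
        print(n_steps, round(prob_any_failure([0.001] * n_steps), 3))
```

With these made-up numbers, the chance that something somewhere goes wrong climbs from roughly 1% for 10 steps to about 18% for 200 steps and about 86% for 2,000 steps. `math.prod` needs Python 3.8 or later.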

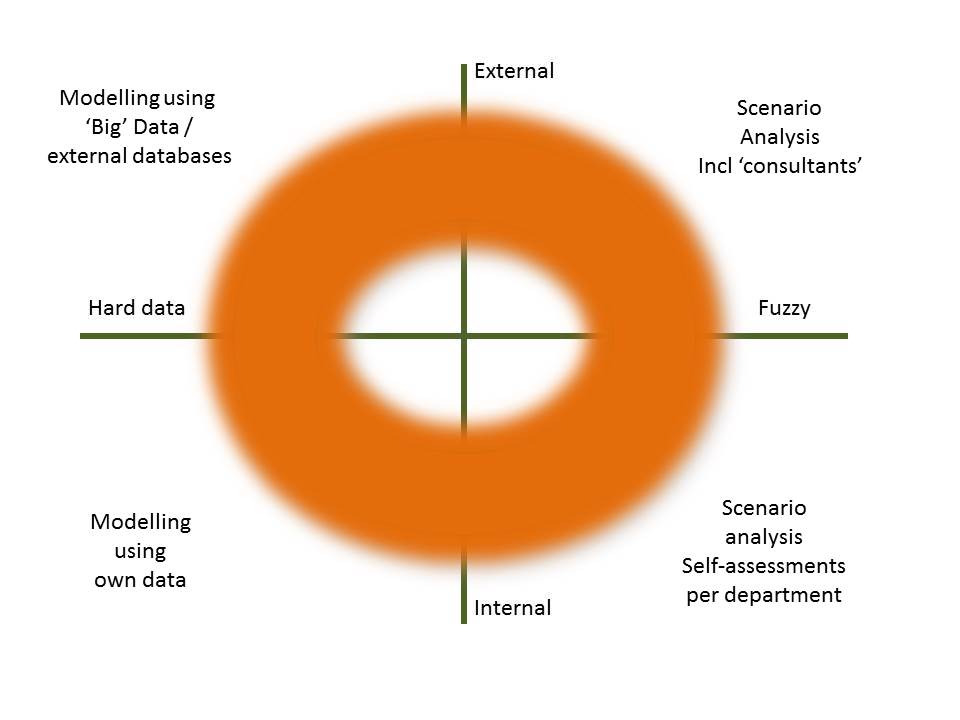

Is there no value in quantitative data analysis? Well, maybe, some. But what is needed is a balanced approach. Get yourselves a donut…:

In which one seeks to weigh what one learns from various sides, without lingering at the origin of innocence, using all the information one can get (or can have made available), and then learns the resulting shape of things risky. The colour is not an indication of overall risk; it’s just that I’m Dutch (and quite a fan of the O’s, too). How to do this weighing is probably an art, or craft, not a science. But we shouldn’t worry too much about that; quantitative fineries have always tended to veer off into the ridiculous in ‘economics’, i.e. the subset of sociology and psychology we’re dealing with here. All this in the tension between those who want to truly manage from their hearts and those too fearful of anything not to want totalitarian ‘control’ (as here).

So we need a modicum of quantitative figures for reporting, assessment and comparability, but also to keep it simple (the full Einsteinian way). Will all this be solved by using the new Business Indicators, a small set of financial outcomes ..? Wouldn’t it have been wiser to focus on the generic part, away from mere banking? One should remember that despite all pretence that haute finance might teach a lesson about how to handle operational risks, the latter have been around since time immemorial. Fires petering out, bears entering the cave, that sort of thing. Your colleague may not have evolved much beyond such business, but industries more rooted in, well, industry have, and they have much to teach. E.g., to set hard (guard rail) boundaries for the risks one cannot allow to go unchecked, like danger to life and limb, but to have only dotted lines to keep ‘normal’ operations going straight and monetary losses in check. A refreshing switch compared to banking priorities ..? At least, something to be studied. And consider that the decades, maybe centuries, of the other industries’ experience and catharsis haven’t led to some ‘portfolio/financial/Business Indicators’ approach. Yes, I’d rather have BIs than nothing, but that’s more of a Hobson’s choice: an obvious, visible error being slightly better than a many-headed, buried one.

Where Basel II-IV modelling for various financial risk categories may do very well with the idea that a couple of needles are compensated by the general edibility of the rest of the haystack, and the needles are recognisable as such, in operational risk the few inconspicuous needles render the whole haystack inedible: a potential major, damaging loss. Financial market products have been standardised in order to be able to hold them in neatly classified portfolios and subcategories, managed statistically. Operational risks… are neither tied to tradeable products nor amenable to such statistical management. No silver bullet overall, no magnet to draw out all the needles.

In the end, it may be that the essence of risk (any risk other than the trivial) is its unpredictability. From the vast universe of inherent risks in all their pesky detail (no employee operates like her neighbour), the precious few you can control (tightly) with hard-and-fast controls are but a drop in the ocean, and they cease to be truly risks once you control them; only the residual risk remains, and it will never be zero.

Plus… all of the statistics (so far…) have included little if anything about the first 100+ percent of any business as actually executed: human work [DAOs aside; still, similar], without which nothing is achieved with all the overload of ‘processes’ in your organisation. The concrete offices would sit empty and idle; likewise all the paper reality and SAP systems.

Doesn’t compute

Hence, formulas are the mantras of the ill-informed: believers in the mantras themselves, the very outward appearance that those with fundamental understanding warn against. This is supplemented by a complete lack of understanding of the nature of modelling, which is to get a grip on reality, not to fill inherently outdated models with inherently outdated data. Models and data will then fit each other snugly: a most common error, but a huge one, in any science. See, I do think that operational risk analysis is actually useful: to learn and understand how things work. To be able to understand. And then to leave the models by the wayside, as they are uncertain at a secondary, abstraction layer, too.

But with ‘Basel IV’, as with previous versions, we’re dealing (or aiming…) not with positive historical description (wasn’t that what bookkeeping was for ..?) and mere reporting by which to hang oneself with the capital requirement burden, but with normative, forward-looking ‘calculations’ that are bound to be imprecise. And we have no (sic) clue how imprecise these ‘predictions’ will be, self-defeating as they must be with the mechanism for that built in: controls will be implemented (or relaxed) based on model outcomes, and those very changes by necessity alter the model.

Against which your counterpoint, signalling the partiality of your contribution and the serious contingency of your deliverables, is lost in the thick of the internal fights anyway; all except the blame, which will come back to get you.

Yes, I am aware that the current wave of ‘get real’ Basel ORM does diminish the overreliance on historic data and analysis-paralysis modelling somewhat. But by far not enough. And it by far underacknowledges the value of the required changes in approach. Why not explore the use of Fuzzy Logic in all its mathematical brilliance ..? Why not investigate the use of signal theory? Determining the value of information, anyone ..? The sobriety of strategic planning should be included, too.

Next, one could even consider again the human part of human nature. Culture… whether organisational va banque or innate arteriosclerosis, or either individual CYA or ‘all of them dunces; I’ll use my cleverness but not show it to you’. Can we model this? Absolutely; the art of psychology has achieved much, more than is often recognised. Add a dose of organisational and societal sociology, blend in a group behaviour cauldron and heat to boiling point. Finish with a couple of pinches of moral and ethical philosophy from your personal liberal arts upbringing. In this, are you an experienced modeller ..?

This latter thingy may, for the time being, be made operational by … drum roll … including a circumscription of the ‘problem’ of P-eople in your organisation. If each and every fte is a locus of operational risks, why not include them as such in any formula you’ll need to achieve the bare minimum of comparability between units and companies?

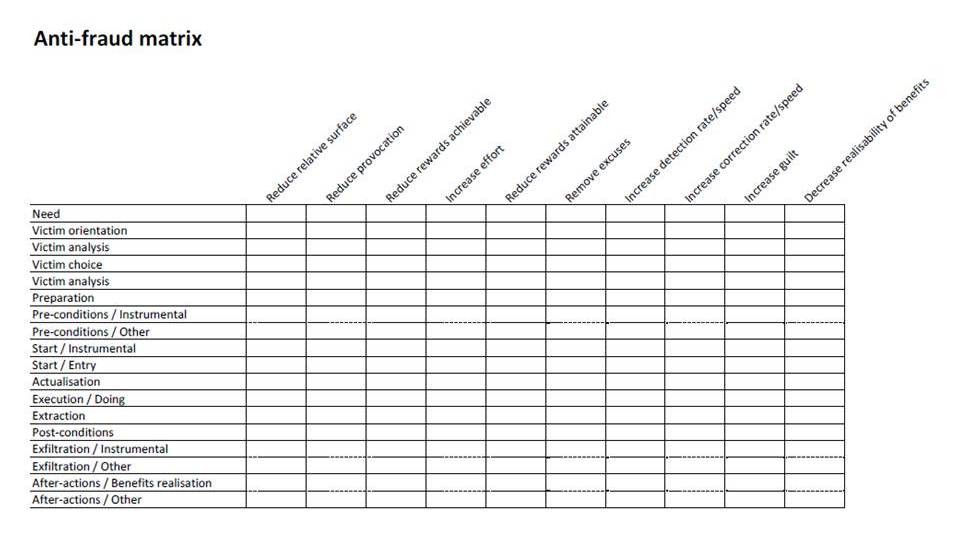

Intermezzo 1: Many bottoms up

Besides, there are a lot of bottom-up style models and methodologies that can help a lot, but that would lead to individual, one-off results for operational risk management. The need for top-down frameworks (a child’s hand is easily filled, as the Dutch saying goes) would swiftly lessen if one better studied and used the learnings of those who have been at the forefront. Not necessarily in the trenches recently, but still knowing their way around the thick of the fight. One could take, e.g., Bruce Schneier’s approach to setting boundaries. And add a dose of patchwork and stuffing by, e.g., filling out the following on a case-by-case basis, hardest and most effective areas first:

So that one doesn’t do too much windmill chasing, but does do the targeted firefighting (in a controlled, well-planned manner…) that comes with being in business in an inherently dangerous world. Again, this will only work if done at the local levels of every corner of the organisation, and will definitely fail if approached centrally, top-down.

But in the end, just as culture eats strategy for breakfast, so will uncertainty eat your ‘control’ like a coffee biscuit. Que sera, sera.

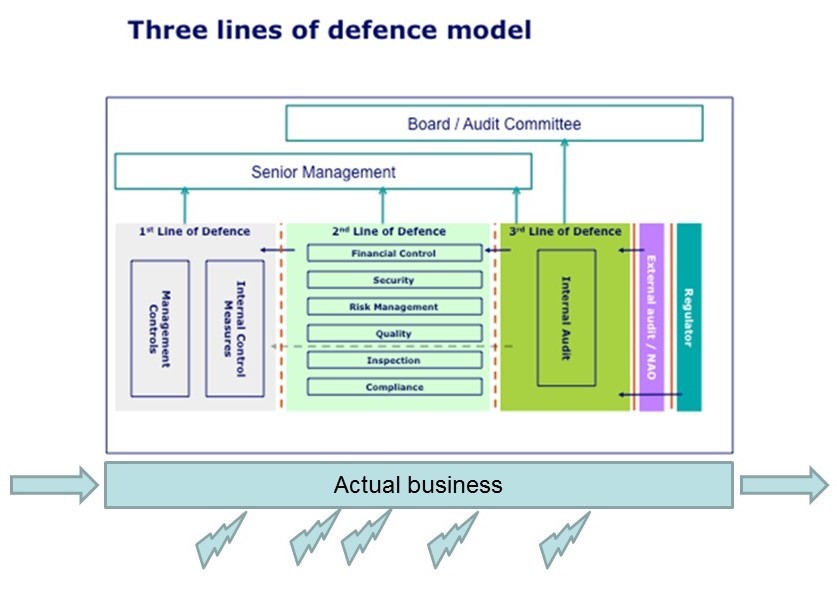

Intermezzo 2: Not the TLD

On a tangent, why not acknowledge that the three lines of defense have failed, first of all, by not being anything like lines of defense. No, lines of defense would stand between threats and vulnerabilities. Would they, in the TLD schemes attempted? Where, moreover, the first line (to bore you: the one that actually makes money, for a change) is the one doing actual management, to be understood as brushing off the roughest edges of shop-floor expert work. No more. Second line: to be effective, not bothering the first line with ‘coaching’, i.e. micromanagement towards irrelevant (sic) top-down petty work, but bothering itself with most discreetly, next to unnoticeably, supporting the first-line managers. Third line: even more so. Even if the latter two have pretensions… they are still the mouse walking across the bridge alongside the stamping elephant: “My, aren’t we making a noise!”.

And that’s not even mentioning the minions shouting over their own insecurity by begging to be GRC staff, thirsting for the ear of truly governance-level executives. The latter are bothered by all sorts of the same and other sycophants who babble about their so wrongly assumed, well, almost birthright to a seat at the Board table. To all of whom one would want to say something along the lines of ‘Get a life’. And ‘study Lord Chesterfield’s Letters better’.

Besides, the author would very kindly ask anyone for pointers to information demonstrating that the third pillar, market discipline (in itself an oxymoron), or even the fourth or fifth lines of bureaucracy, have helped in ‘controlling’ operational risk.

But that’s just my Carthago delenda est, and I’m not complaining negatively, am I? Anything that makes the tide rise higher will, directly and/or indirectly, lift my boat a bit more, too.

Back: To Evolve From Here

So, what to do about operational risk ..? Maybe, from a regulators’ perspective (sic): not much. Require that an organisation has its frameworks in place, and implemented. Auditable by auditors, who will have to do the grunt work of evidence collection themselves, not proof-as-ultimate-outcome style with tons of file compilation to prove one’s own innocence. And recognise that this may not enhance comparison with peers, but that’s too bad. Better that all individually do their best to catch LIBOR-gate class crimes and misdemeanours than that all smother in unusable overhead. So the retreat via the BI road of the consultative paper is necessary and honourable: not a loss nor a desired outcome, but a tactical regrouping to be able to charge from some other direction.

Next, let’s all hope that the preference for centralised implementations of ISO 310xx blows over like an unwanted, because most ineffective, dust cloud. Rather, have the most decentralised embedding in the ‘first line’ possible, with some, almost hapless, second line of facilitators and report collectors; not for the purpose of control but for upward feed of overview[3]. No more.

Operational Risk-weighted Capital… yes please, if directed at creating reserves for actual losses to be expected, not as a liquid-market portfolio of returns at risk. The BIs could possibly serve as a proxy for those, though better shorthand estimates (ratios, I guess) should exist. Preferably with the inclusion of fte’s as a rough-cut measure of operational risk (but what can you do, without much more detail, yet): a much better measure even than just turnover or revenue or what have you. And with a wise and precise correction for the fact that those with more authority and authorization (higher up, and often hiding in dusty corners of the organisation, too) qualitate qua pose a larger operational risk, translatable into financial risk, than just any clerk, resulting in Board members being huge risk factors indeed.
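The fte idea above can be sketched roughly as follows. This is a hypothetical illustration under loudly stated assumptions, not the consultative paper’s Standardised Measurement Approach and not a calibrated formula: the BI rate, the per-fte charge, the staff bands and their authority weights are all invented placeholders. It only shows the shape of the proposal: a BI-based term plus a headcount term in which authority counts more heavily.

```python
from dataclasses import dataclass


@dataclass
class StaffBand:
    """A group of staff at one authority level (hypothetical structure)."""
    name: str
    fte: float
    authority_weight: float  # hypothetical multiplier: more authority, more op risk


def weighted_fte(bands):
    """Headcount in fte, scaled up for authority and authorization."""
    return sum(b.fte * b.authority_weight for b in bands)


def op_risk_capital_proxy(business_indicator, bands,
                          bi_rate=0.12, charge_per_weighted_fte=5_000.0):
    """Hypothetical capital proxy: a flat charge on a Business Indicator plus
    a charge per authority-weighted fte. Both rates are placeholders, NOT
    BCBS coefficients."""
    return bi_rate * business_indicator + charge_per_weighted_fte * weighted_fte(bands)


if __name__ == "__main__":
    # Invented example organisation.
    bands = [
        StaffBand("clerks", fte=900, authority_weight=1.0),
        StaffBand("managers", fte=90, authority_weight=5.0),
        StaffBand("board", fte=10, authority_weight=50.0),
    ]
    print(weighted_fte(bands))  # 1850.0
    print(op_risk_capital_proxy(1_000_000_000, bands))
```

With these made-up weights, the ten Board-level fte contribute 500 of the 1,850 weighted fte, more than half as much as the 900 clerks together; the real work would lie in choosing and defending the weights.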

The capital thus held is not to be invested as fixed ballast, but kept as reserves to be released (and rebuilt) to counter actual losses on the P&L. Business As Usual. So now, let’s all get Basel IV compliant…?

[I truly welcome your on-topic comments, thank you very much in advance; and, structurally, your recommendations may well be needed, too.

And in a next post, I may extend this thinking into the crossover of old-style (?) Basel ORM into Information Risk Management and Information Security, and vice versa. A.k.a. how to jump Kuhnian paradigms.]

1 Especially a lot of those, suspected by many of compensating.

2 Insurance is often mentioned but in practice isn’t implementable as a hedge! It is, possibly, a hedge for event ‘LGD’ only, and then only if all costs of control improvements have already been incurred; otherwise, often no event is covered at all. Also, insurance is blanket, hence unrelated to any event class (if there’s such a thing…), and provides no incentive to take care.

3 This, as proposed in ISACA’s CRISC body of knowledge; the more so as ‘cyber’crime continues to drive large parts of ORM.