Recently, I was reminded (huh) that our memories are … maybe still better than we think, compared to the systems of record that we keep outside of our heads. Maybe not in terms of their ‘integrity’, but in terms of ‘availability’. Oh, and there, too, it is unclear whether availability refers to quick recall, the ‘at short notice’ kind, or to the long-run kind, where recall comes ‘eventually’, again under multivariate optimisation with ‘integrity’. How ‘accurate’ is your memory? Have ‘we’ in information management / infosec done enough definition-savvy work to drop the inaccurately (huh) and multiply interpreted ‘integrity’ in favour of ‘accuracy’, which is a thing we can actually achieve with technical means, whereas the other oft-given meaning, of data being exactly what was intended at the outset (compare ‘correct and complete’), is not? Or do I need to finish this line that has run on for far too long now …?

Or have I used waaay too many ‘’s …?

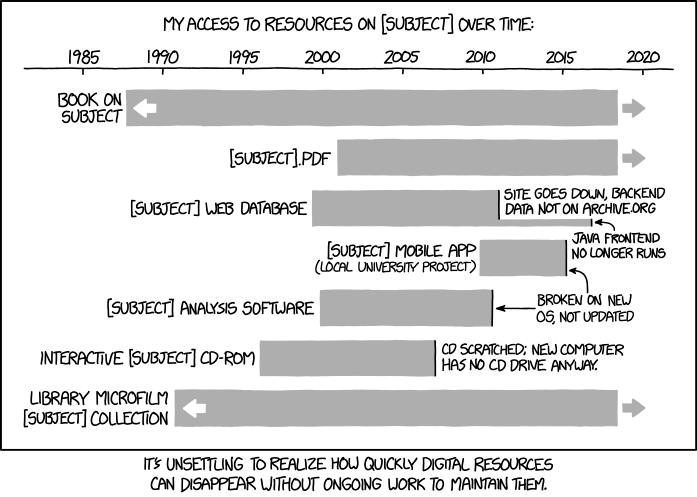

Anyway, Part II of the above is the realisation that integrity is a personal thing, directed towards one’s web of allegiances, as per this, and that in infosec we really need to switch to accuracy. Part I is this XKCD: