Is this management, or control?

[Hospital St. Pau, Barcelona]

Just a note, on errors (not minor omissions) that I see over and over again:

‘Management’ ≠ ‘Control’

Rather, Management >> Control. Control is not allowing exceptions, or deviations from predetermined values beyond a predetermined bandwidth. Management is dealing with whatever value comes out of your measuring system, whether or not one can steer the inputs to and operations of the production system so the outcomes come back into the fold.

Control, one does out of fear. The fear of not being in control (sic) over one’s own destiny, as if that were completely tied up with the workings of the ‘managed’ system, as if systems Compliance were the ultimate you can achieve in life. If so, one should discuss suicide; that shows utter control as well as categorical prevention of any future mishap to one’s plans. Control is about the fear of not being able to deal with deviations for which there is no rule, i.e., for which one, as a drone following only set, detailed orders (less than a robot; like an inhuman machine that does not really think, let alone operate ethically), has no answer; showing one’s incapacity for dealing with the real world. Admitting one would be happier as the subject in the Truman Show. Not wanting to escape.

Management, as above, is about being capable of understanding the system(s), being able to see the innately unstable nature of life (as a system, too), and dealing through insight with all that comes at you. Having ‘control’ and the rules for the set pieces, yes, but having those only for the petty little uninteresting parts of life. The rest is an adventure. Bring it on!

So, management is so much more than mere simple control. If one would look down on those that desperately vie for control over … in their ‘management’ positions: a wry smile will do. Avoid them. Spiritual death by crushing bureaucracy is what they spread.

Which, by the way (?), also clarifies our message, in many a previous post, that ‘risk management’ gets to be more and more what ‘first line’ ‘management’ is about in these times of knowledge workers / professionals. The latter know very well what to do and how to do it; probably better than you (because you apparently were/are more valuable not doing it yourself…). Hence, they require you to defend them against the outside world, starting with the other departments around them. And your job as manager is just to grind off the rough edges of what your teammates (sic) do, as the knowledge workers are managing their work, not controlling it – the uninteresting controllable part they leave to machines (and, ever more, to indistinguishable AI).

Facilitate, grind off the rough edges, and defend against ‘asteroid’ impacts from all around. [Actual decisions, cutting through dilemmas (if there are none, then it’s mere logic), are Leadership work, not for shop-attending managers.]

The latter, of course, is risk management. NOT to be done in separate ‘second line’ departments; if so, they’ll be useless overhead burdens (and much cost!). There, it is only to be facilitated and coordinated – when, not if, first-line managers think they can do it better via their own methodology and practices, they are right…

Borne out, after writing this, by Seth’s blog again.

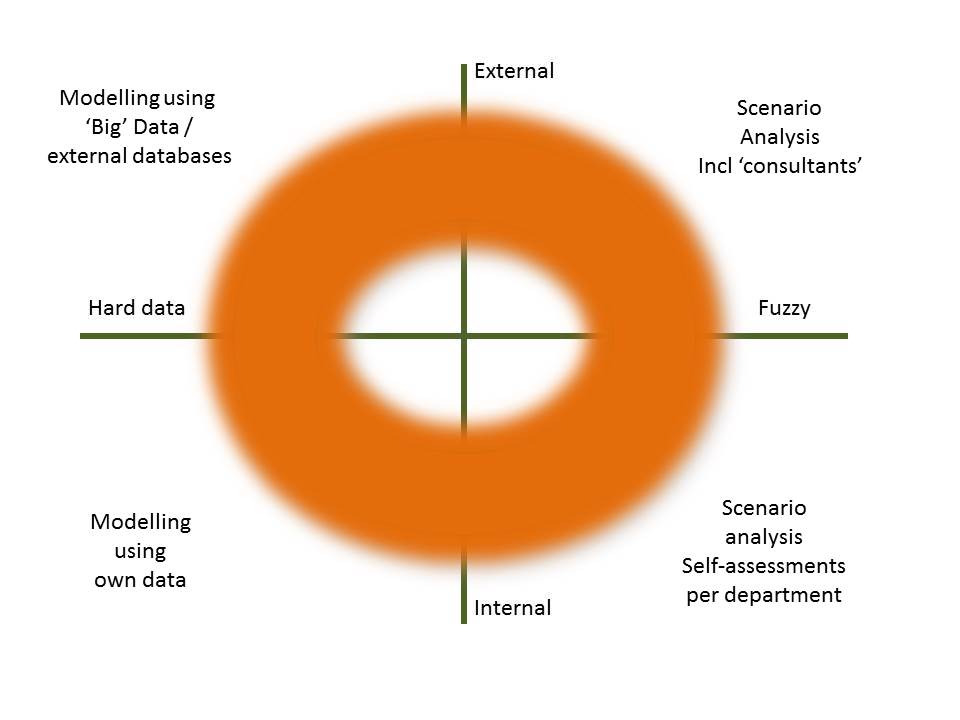

So, since they started, are hospitals about management or control ..? Well, they control what they can, i.e., taking away the negative impacts of outside air, as at St. Pau, by bringing the hospital underground (yes, in the picture, it’s the roof that appears to be street level ..!) while also enabling fresh air to be enjoyed by those who’d want to wander around on-site; both functions in one place, not spread out. But they are not controlling (here in the picture!!) the health of their patients; they are managing that. Apart from postponing (sometimes for quite a while) the inevitable, do doctors heal, or do they create the right setting (incl. medicine and surgical changes) for their ‘employees’/systems/patients to do their thing, the healing they want to ..?

[Edited to add:]

Oh by the way, yes I do realize there’s more to management of course, in particular middle management, on the side of ‘defending’ one’s department and getting sufficient and right resources, and stuff. Reporting, less so. As if that were the goal… Etc.

Upper management (sometimes dubbed ‘governance’, wrongly, as in this post) has more coordinating tasks, and more leadership to display, and a more mint picture to present to the outside – which includes showing the dirty bits ..! (think that one through)

![20130211_144900[1]](https://maverisk.nl/wp-content/uploads/20130211_1449001.jpg)