As a living document, grown out of earlier posts but now written up a little more neatly and supplemented here and there (so further additions will follow here..!), the following:

Standards and prescribed controls are fine. Until they are interpreted so rigidly and narrowly that they hinder the good people. Then those same employees within your organisation will, after all, go looking for ways to simply get their work done, in spite of or around the restrictions. The ‘positive’ assignment to get the work done and be productive (one is, after all, paid to bring the organisation's goals closer) is stronger than the ‘negative’ boundary conditions. The latter we all know as the traditional ‘controls’: the rails for a train, which determine exactly where it may run. With a few millimetres of play, but no more. Which determine exactly which functionality may be clicked and which data may be entered, and nothing else.

Given the Law of requisite variety [1], however, every system, including the system of controls that defines the organisation, must be flexible enough to process unexpected input from its environment. Whether the controls are directional, i.e. steering through objectives, KPIs et al., or negative, restricting the how and the route, they must leave room not only to fit the current complexity of the environment but also to cope with the future environment. Just imagine: otherwise the implementation of an SAP system [2] would already be outdated the moment it is rolled out…!

Oh. Well. Q.E.D.

Yes, in the old days we could still make do with trains, for bulk transport from A to B. But the world simply demands more flexibility in the From and the To. So we have cars and lorries. So much more flexible, but still with the risk of veering off course.

That also means that we need not rails but guardrails. So that employees in an organisation can do their work, can stay within the dotted lines of their lane and can change lanes where necessary. Yes, you too may move to the right to make room for people in a hurry. Should your mobile phone fail, or should you not be very skilled at driving, or should an ambulance need the middle lane, there is still the regulated fallback of the hard shoulder. No pain at all; it catches the not-too-large swerves. (And, not unimportant either: if you get a phone call that the meeting is at a different location, there are plenty of exits to take without going off the road.)

With the guardrails as the ‘ultimate’ last resort [3]. Indeed, it does not work entirely without control, or why else would we have tarmac?

How, then, do we place the guardrails in our world of controls? A. We don't; (the illusion of) total Kontroll muss sein. B. By leaving margin, by not insisting at every point that only the perfect, zero-sigma action is allowed. Hint: B is the right answer.

Anyone who thinks they can implement A will be disappointed. See requisite variety: clinging frantically to strict rules goes well for a while, but below the surface people are already deviating, and at some point it will blow up [4]. And so we go and implement yet another round of processes, roll out ITIL, IAM or an ISMS (or have it rolled out) or the like. Because then we simply reorganise the lot, to the latest state (!) of affairs, don't we? See above regarding SAP. It costs a bit, but then you do have something, for a brief moment. After that the ‘cramming in’ begins, with all the resulting loss of quality and the patch functionality or patch systems (there is Excel again).

Yes, B is clearly better in the longer term. But How, Then? Well. The answer to that is not all that clear yet. RPA is a start, provided it is implemented ‘well’, so that growing along (through continued learning) with changing circumstances is built in. That does require machine learning, yes, and human steering. With all the pluses and minuses that entails [5], but in any case with a reduced need for eager-beaver ‘managers’ who focus mainly on the procedure and therefore necessarily less on performance. Yet RPA, too, often gets stuck in pouring whatever arrives from the environment into overly fixed, well-trodden, desired paths.

The how cannot yet be answered categorically. In our field we simply still have too little experience with facilitating room to manoeuvre. What we do know is that the medical industry has for some time been using pacemakers to regulate what the heart gets up to. Perhaps the first models drove the heart absolutely, at a single fixed rate, but that has long ceased to be the case. On the contrary, there are several types, for various functions.

There is the ‘classic’ (so, fortunately, not really) pacemaker, which mainly keeps the heart rate, the frequency, within an adjustable bandwidth. Not below 50, not above 100 (with margin). Monitoring is continuous, but as long as the heart rate stays within the band there is no reason to intervene by taking over the pacing in a ‘move over, I'll do it’ manner. But if the rate becomes too relaxed, it is adjusted. And that can also happen on the basis of ‘external’ signals. For example, if ‘exertion’ is detected and the heart will not go along, the ‘floor’ is raised to push the heart over the threshold into action after all. Back, in effect, into the bandwidth that has been shifted upwards.
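To make the bandwidth idea concrete, here is a minimal sketch in Python. It is an analogy only, not how a real pacemaker is programmed: the class `BandControl`, the action names and the raised floor of 70 under exertion are all hypothetical; only the 50 and 100 bounds come from the text.

```python
from dataclasses import dataclass


@dataclass
class BandControl:
    """Hypothetical band-keeping control: act only outside [floor, ceiling]."""
    floor: float = 50.0           # lower bound, from the text
    ceiling: float = 100.0        # upper bound, from the text
    exertion_floor: float = 70.0  # assumed raised floor while exertion is detected

    def check(self, rate: float, exertion: bool = False) -> str:
        # An external signal (exertion) pulls the floor up; the ceiling stays put.
        floor = max(self.floor, self.exertion_floor) if exertion else self.floor
        if rate < floor:
            return "pace_up"      # take over and push the rate back into the band
        if rate > self.ceiling:
            return "rein_in"      # above the band: correct downwards
        return "leave_alone"      # inside the band: monitor only


control = BandControl()
readings = [(72, False), (45, False), (60, True), (110, False)]
actions = [control.check(rate, exertion) for rate, exertion in readings]
print(actions)
```

Note that a rate of 60 is left alone at rest but triggers `pace_up` under exertion: the same observation is judged against a band that moves with the circumstances, which is exactly what rails cannot do.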

And there is the ‘built-in AED’. Quite a bit more complex, because it looks mainly at the build-up of the rhythm: atria first, ventricles after, with the right side slightly ahead of the left [6]. Some deviation and variation in these already small differences is allowed, and intervening is still rather drastic [7], so it will not be done lightly. But if there really is a cardiac arrest, the cold restart follows.

Typical guardrails, aren't they? Laissez-faire until we can no longer allow it.

And of course combinations are possible. We will certainly need those in your organisation as well, after all. Even if you yourself keep neatly to the rules, you know enough colleagues who are just trying to get their work done.

Do we know such controls in our cyber-control profession too? Implanted, built into the organisation's procedures? Shouldn't we start developing them?

Oh, and can we perhaps learn something from accountancy? Certainly, as always. But then we are talking more about process mining during the interim audit, to analyse processes. Typical requisite variety is what we then see. The Happy Flow is surrounded by a cloud of non-standard transactions. On which the RA (registeraccountant, the Dutch chartered accountant) makes an estimate of the degree of control. And then: not much. At most the conclusion that extra substantive testing is needed and/or, for the management letter, that the procedures must be tightened.

In accountancy, and in the process industry, where control thinking is so much older and further developed, we also know the idea of bandwidths, permitted play or whatever it may be called. Materiality of deviations, remember? In a paint (tin) factory in particular, steering is done on margins for recipe and filling. With continuous monitoring and feedback loops to be able to adjust. But the adjusting: can we already do that in our world? Do we have the right means? Yes, we can raise a finding that a control which is absent or does not work properly must still be (re)implemented properly, but that is not the same thing, is it? Certainly not when the paint factory is adjusted because of variation in the inputs; the recipe and the process steps stay the same, after all.

In short, we do not have them yet, the pacemaker controls, the guardians of the bandwidth. But we should definitely want them, because otherwise we make ourselves impossible with our current controls, the rails. Mind you: let us provide dynamic limits on the (process-mining) transaction clouds, logging etc. So that, to name just one thing, material deviations are flagged to us and we can look at what is going on. And/or so that deviations threatening to grow too large can be corrected, so that we do not run into them only after year-end.
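What such a dynamic limit could look like, as a rough sketch only: a rolling band around recent transaction amounts, where a value is flagged only if it falls outside the band and the deviation is also material. The function name, the window of 20, the factor `k` and the materiality threshold of 1000 are all assumptions for illustration, not taken from any standard or tool.

```python
from collections import deque
from statistics import mean, stdev


def flag_material_deviations(amounts, window=20, k=3.0, materiality=1000.0):
    """Yield (index, amount) for values outside a rolling band that are also material."""
    history = deque(maxlen=window)
    for i, amount in enumerate(amounts):
        if len(history) >= 5:  # need a few observations before a band makes sense
            centre = mean(history)
            spread = stdev(history)
            deviation = abs(amount - centre)
            if deviation > k * spread and deviation >= materiality:
                yield i, amount
                continue  # flagged values do not shift the band
        history.append(amount)  # everything else moves the band along


amounts = [100, 102, 98, 101, 99, 103, 97, 150, 5000, 100]
print(list(flag_material_deviations(amounts)))
```

The 150 is unusual but not material, so it passes and quietly widens the band; the 5000 is both, so it is flagged. Keeping flagged values out of the history is a design choice: it stops one outlier from stretching the band, at the price that a genuine shift in the process keeps being flagged until someone accepts it, which is where the human steering comes in.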

And our clients, the executives of organisations, do not want to travel by train on rails; First Class is already overcrowded and restricts the free choice of route and travel time too much. The motorway, on the other hand…

But apart from ‘at most two failed login attempts’ (yes, really) as a fairly simple ‘margin with a no-further limit’, and apart from equally dumb input format checks [8]: who in our cyber world knows of such controls ..?
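For reference, the one ‘margin with a no-further limit’ named above fits in a few lines. This is a sketch under assumptions: the class `LoginGuard` is hypothetical, and it reads ‘at most two failed attempts’ as two tolerated failures with the third one locking the account.

```python
class LoginGuard:
    """Hypothetical lockout counter: a margin of failed attempts, then a hard stop."""

    def __init__(self, max_failures: int = 2):
        self.max_failures = max_failures  # tolerated failures (assumed reading of the text)
        self.failures = 0
        self.locked = False

    def attempt(self, password_ok: bool) -> str:
        if self.locked:
            return "locked"       # past the guardrail: nothing gets through
        if password_ok:
            self.failures = 0     # a success restores the full margin
            return "ok"
        self.failures += 1
        if self.failures > self.max_failures:
            self.locked = True
            return "locked"
        return "retry"            # inside the margin: no intervention


guard = LoginGuard()
results = [guard.attempt(ok) for ok in (False, False, False, True)]
print(results)
```

Simple as it is, it has the guardrail shape: nothing happens inside the margin, and the intervention at the boundary is drastic.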

- 1 https://en.wikipedia.org/wiki/Variety_(cybernetics)#Law_of_requisite_variety

- 2 As a well-known example, without any vendor preference or aversion.

- 3 Oh, and let us not forget to implement brakes as well, so that overly wild or runaway driving behaviour can be calmed down. Typically a control that is there but does not hinder normal driving. The brake discs are not continuously dragging, hopefully…

- 4 Nassim Taleb had a number of examples of this. Among others: when everyone pats themselves on the back because inflation is now really under control, or because share prices keep rising nice and steadily, that is pretty much a sure sign that a big crash is near! See https://maverisk.nl/pacemakers-voor-control/ for other examples.

- 5 See https://www.norea.nl/magazine/kennisgroep-robotic-process-automation and https://www.norea.nl/magazine/virtuele-medewerkers-in-control and many others.

- 6 The author learns a thing or two from his own case…

- 7 If it is really necessary, a typical AED shock is delivered.

- 8 In essence a control on Integrity, a category all of its own, because in security do we mean integrity of software and/or of data? The latter is surely a concern of the users of the functionality; surely we have nothing to do with that? There is quite a bit more to be said about this…

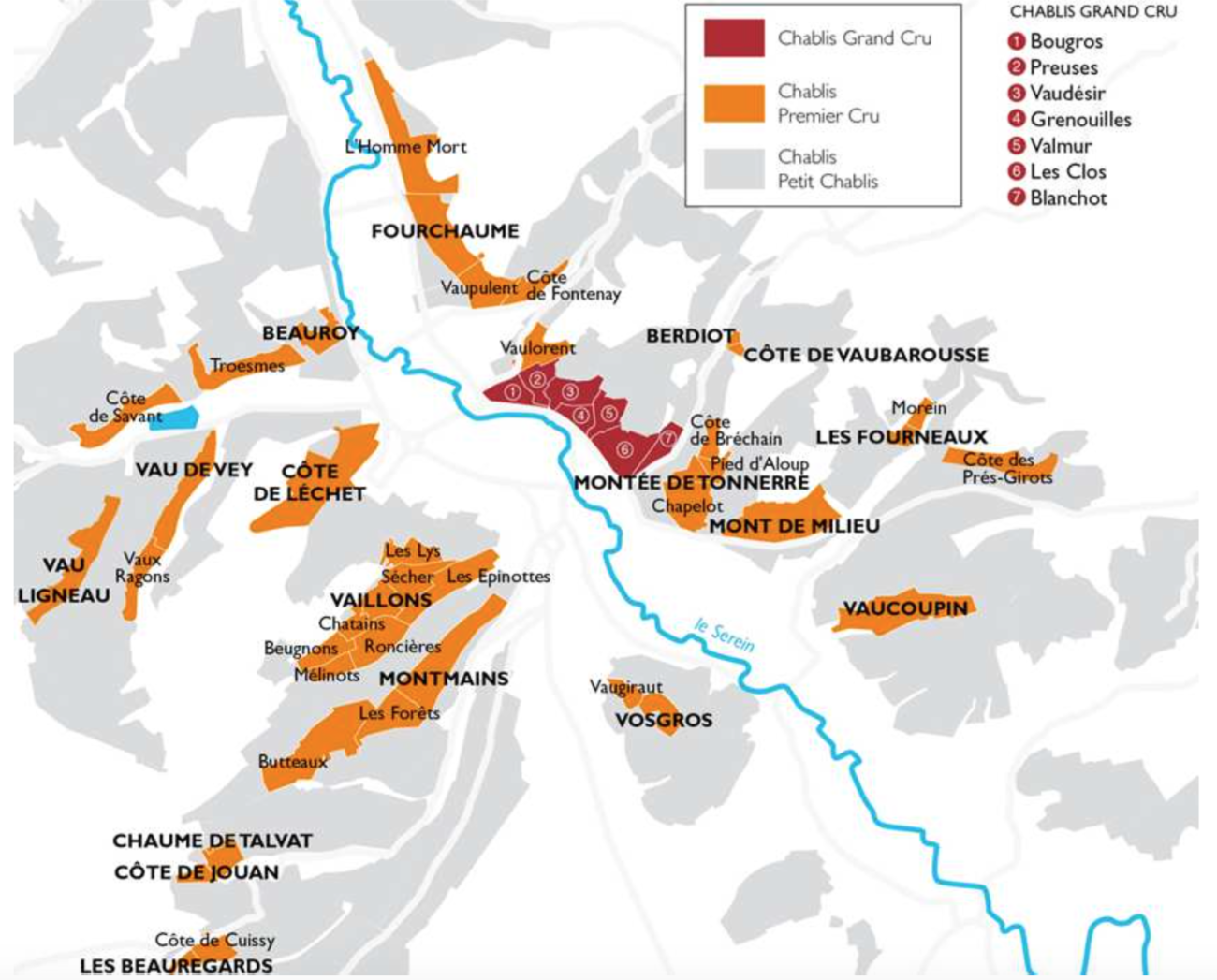

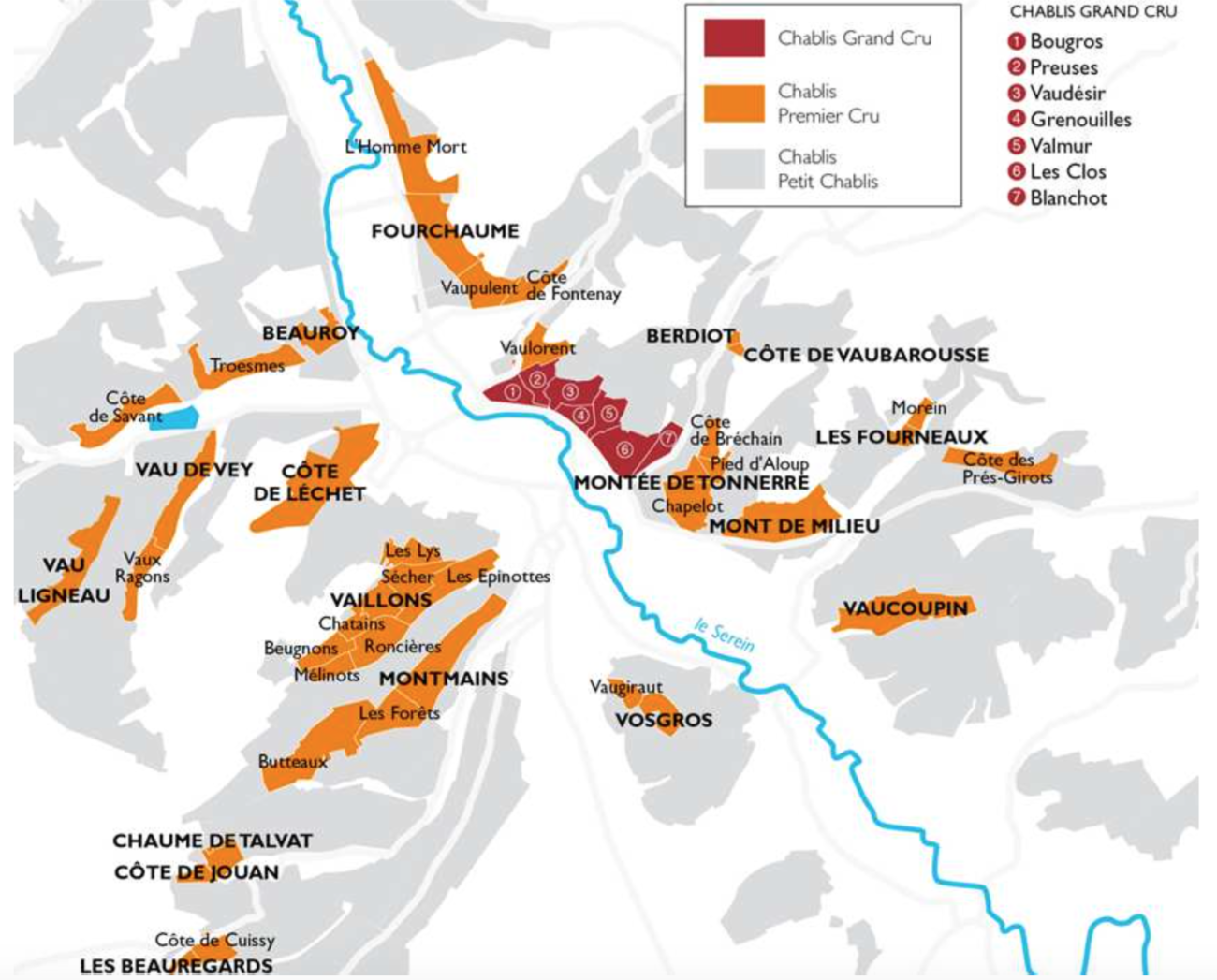

Oh, and of course, as thanks for the cans and boxes of attention you have given:

Also interesting. For no reason, but referring to this one, of course. Or ..?

First off, the yellow-golden, almost amber colour would promise some sweet, almost creamy elements. But with a delightful tingle from a fresh mousse, and a light bitter-tartness in the nose, the picture turns around completely. There’s Mirabelle ..!

[Parasol up, book at hand; a refreshing dip and a bottle of the local brew within reach: life is hard in Viviers-sur-Artaut…?]